In a comment to the previous posting, gmagee has asked why a superwave hitting the entire remnant would cause us to see a flare, given that the remnant is many light years in size. He wonders why the emission due to a brief rise in superwave cosmic ray intensity would not average out over the entire remnant, thus preventing us from seeing an intensity change lasting only a few days.

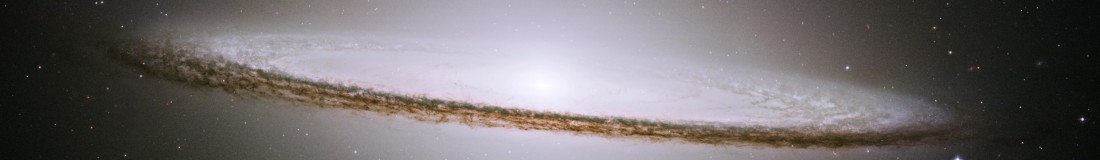

The reason is that we are seeing gamma radiation from a very small area of the Nebula. During a gamma ray flare we do not see the majority of the gamma ray emission radiated by the entire Nebula; we see only the gamma emission that is directed precisely in our direction. In other words, this gamma emission is coherent synchrotron emission, rather than incoherent synchrotron emission. Consider that the cosmic rays producing this gamma emission are normally considered to have energies of between 10¹⁵ and 10¹⁶ eV. This means that the cosmic ray electrons have Lorentz factors (γ) of between 10⁹ and 10¹⁰. Electrons of such high energy beam their gamma synchrotron emission into a narrow cone in the forward direction of their travel, where the cone has a half-angle of θ = 1/γ radians = 10⁻⁹ to 10⁻¹⁰ radians. This equals just 0.2 to 0.02 milliarcseconds! So when these cosmic ray electrons orbit around magnetic field lines encountered in the Crab’s magnetized plasma sheath, they will beam their radiation in a very limited direction, which we will see only when those electrons are aimed toward us in their gyration orbit. If they deviate from our line of sight by more than this angle, their radiation will be entirely invisible to us. The actual radiation cone that is beamed out will be wider than this because the superwave cosmic rays impacting the Nebula will have some degree of angular dispersion. Let us say that the incident volley has an angular variation of 1 degree of arc. Then cosmic ray electrons spiralling around Nebula field lines a short distance away, which beam their radiation, let us say, two degrees away from our line of sight, will be totally invisible to us.
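The beaming arithmetic above can be checked with a short Python sketch. It is only an illustration: the function names are hypothetical, the electron rest energy of about 0.511 MeV is a standard value not quoted in the post, and the cone half-angle is taken as θ = 1/γ, as in the text.

```python
import math

# Assumption: electron rest energy of about 0.511 MeV (standard value, not from the post).
ELECTRON_REST_ENERGY_EV = 0.511e6

# Milliarcseconds per radian: degrees per radian * 3600 arcsec * 1000.
RAD_TO_MAS = math.degrees(1.0) * 3600.0 * 1000.0


def lorentz_factor(energy_ev):
    """Lorentz factor of an electron with the given total energy in eV."""
    return energy_ev / ELECTRON_REST_ENERGY_EV


def beaming_half_angle_mas(gamma):
    """Half-angle of the synchrotron beaming cone, theta = 1/gamma, in milliarcseconds."""
    return (1.0 / gamma) * RAD_TO_MAS


# Round Lorentz factors used in the text.
for gamma in (1e9, 1e10):
    print(f"gamma = {gamma:.0e}: half-angle = {beaming_half_angle_mas(gamma):.3f} mas")

# Lorentz factors implied by the quoted energy range.
for energy_ev in (1e15, 1e16):
    print(f"E = {energy_ev:.0e} eV: gamma = {lorentz_factor(energy_ev):.2e}")
```

With the round values γ = 10⁹ and 10¹⁰ this gives half-angles of about 0.2 and 0.02 milliarcseconds, as stated. Computing γ directly from 10¹⁵ and 10¹⁶ eV gives values about twice as large, so the figures in the text are best read as order-of-magnitude estimates.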

If you calculate the depth of the Nebula along the line of sight (considering that the superwave cosmic rays are travelling away from us toward the Galactic anticenter), it works out to d = r (1 − cos θ), where r = 4 light years is the approximate radius of the Nebula. For θ = 1 degree this gives 0.0006 ly, or a depth of 0.2 light days. So variations in cosmic ray intensity lasting 1 day or more should certainly be reflected in gamma ray intensity variations of comparable duration.
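As a quick check of this depth estimate, here is a minimal Python sketch of d = r (1 − cos θ). The function name and the 365.25 days-per-year conversion are assumptions made for illustration; r = 4 light years and θ = 1 degree are the values used above.

```python
import math

DAYS_PER_YEAR = 365.25  # assumption: Julian year, used to convert light years to light days


def line_of_sight_depth_ly(radius_ly, theta_deg):
    """Depth d = r * (1 - cos(theta)) of a spherical cap, in light years."""
    return radius_ly * (1.0 - math.cos(math.radians(theta_deg)))


depth_ly = line_of_sight_depth_ly(radius_ly=4.0, theta_deg=1.0)
depth_light_days = depth_ly * DAYS_PER_YEAR

print(f"depth = {depth_ly:.4f} ly = {depth_light_days:.2f} light days")
```

This reproduces the figures in the text: roughly 0.0006 light years, or about 0.2 light days. Because the depth scales approximately as θ², a 2 degree spread would give roughly four times that depth.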

In 1977 W. Kundt wrote that incoherent synchrotron emission can account for only ~1% of the optical luminosity in the Crab Nebula’s wisp region, and that it also cannot account for the 1% fraction of the optical emission that is circularly polarized. This led him to propose that the Crab Nebula was being energized by a cosmic ray “beam” that was producing coherent synchrotron emission, the mechanism we are proposing above. In particular, he proposed that the emission was being produced by stimulated synchro-Compton emission beamed toward the observer. As I pointed out in 1983 (pp. 177–179 of my Ph.D. dissertation), this emission is most likely stimulated by superwave cosmic rays propagating along our line of sight toward the Galactic anticenter and impacting the Crab Nebula. The pulsar is an unlikely origin for the beamed emission coming from the wisps, since the vector from the pulsar to the wisps makes a large angle with respect to our line of sight. The superwave source is left as the more plausible alternative.

Kundt, W. “The Wisps in the Crab Nebula: A Cosmic Laser?” Astronomy and Astrophysics 60 (1977):L19.

Are we seeing flaring activity from a localized region of the nebula, where particularly strong magnetic fields in the nebula are properly aligned to emit synchrotron radiation in our direction in response to the passing of an intense part of the superwave? And if so, would not this region be captured by Chandra? Or are we seeing a summation of various regions, each experiencing a particularly intense moment of the superwave simultaneously with respect to our line of sight?

Finally, some have wondered why other nearby nebulae would not be similarly affected. I suspect the answer is that the superwave duration is too short, such that the Crab is the only one exposed at the moment. Indeed, can we conclude a minimum time that the Crab has been exposed to the superwave? It was discovered in 1731, so likely at least several hundred years.

The superwave is coming, although the time period may be as soon as next week and as far away as a few years (10-25) – at least according to Disclosure Letters my father left me concerning his time at NASA.

There are many known factors to this on the inside, but twice as many unknowns in this regard.

I have great respect for your work, LaViolette, and am writing a book of my own pertaining to the inclusion of a second star (brown dwarf) into our (local) solar system, as well as the fact that the galactic center “burps” every 22 thousand years and, once one hits, another is halfway here (which would correspond somewhat to your theory that currently “more than one are headed this way”).

If you would like to share some information, I would love to discuss my father’s letters with you, as I believe you would benefit from them.

Best regards,

Eric Anthony Crew

http://www.aquarianphilosophy.com