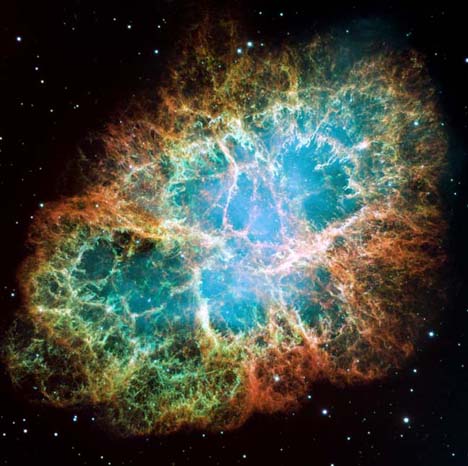

In his 1983 Ph.D. dissertation, Paul LaViolette presented the novel theory that most of the radiation coming from the Crab Nebula is not due to cosmic ray emission coming from the Crab pulsar, but rather is produced by a cosmic ray electron volley (a galactic superwave) that is currently propagating toward the galactic anticenter and impacting the remnant face-on. He theorized that these superwave cosmic rays are currently being captured by the magnetized plasma forming the Crab remnant, which causes them to emit synchrotron radiation, thus illuminating the nebula.

Recent observations of gamma ray flares in the nebula help support LaViolette’s theory. Measurements made with the Fermi Gamma Ray Space Telescope have shown that in February 2009 the Crab Nebula’s gamma ray intensity rose by a factor of four over a 16-day period before subsiding back to background levels. Also, in September 2010 its gamma ray intensity rose sixfold over a 4-day period. Details are reported in the February 2011 issue of Science magazine.

The cosmic rays producing this gamma emission have such high energies that they cannot travel further than 0.1 light years. So if the cosmic rays had originated from the Crab pulsar, all of their emission would have had to come from a region 0.2 light years in diameter centered on the pulsar. However, the Crab pulsar may be ruled out as being the source of these cosmic rays since observations with the Jodrell Bank radio telescope have shown that during these flares there was no change in the pulsar’s radio flux intensity, pulse shape, or rate of pulse period increase; see report in the Astronomer’s Telegram.

Furthermore the astronomers who studied these flares were puzzled by the finding that these gamma flares were necessarily produced by cosmic ray electrons having energies of 10 quadrillion (10 million billion) electron volts. These were the highest energy cosmic rays ever observed that were able to be traced to a specific astronomical object. The problem is that astronomers have no idea how a pulsar, like that associated with the Crab nebula, could have accelerated cosmic ray electrons to such a high energy, and have accomplished this acceleration so rapidly. They say the discovery challenges all theories about how cosmic ray particles are accelerated (see story in Science Daily).
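To put the quoted electron energy in more familiar units, here is a minimal sketch that converts "10 quadrillion electron volts" to PeV and joules. The function name `describe_energy` is purely illustrative, and the only physical input is the standard SI value of the electron volt.

```python
# Joules per electron volt (exact SI value of the elementary charge).
JOULES_PER_EV = 1.602176634e-19


def describe_energy(energy_ev: float) -> dict:
    """Express an energy given in eV in a few common units (illustrative helper)."""
    return {
        "eV": energy_ev,
        "PeV": energy_ev / 1e15,              # 1 PeV = 10^15 eV
        "joules": energy_ev * JOULES_PER_EV,
    }


# "10 quadrillion" = 10 x 10^15 = 10^16 electron volts
flare_electron = describe_energy(10e15)
print(flare_electron)
```

On this conversion a single such electron carries about 10 PeV, or roughly 1.6 millijoules.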

This mystery is easily solved if the cosmic rays producing this gamma ray emission originated from the energetic cosmic ray source residing at the center of our galaxy and are part of a superwave currently impacting the Crab remnant, as suggested by LaViolette. The flares would indicate that we happened to observe the remnant at times when it was being impacted by a higher than normal density of ultrarelativistic galactic cosmic ray electrons. As noted from observations of active galactic nuclei, cosmic ray emission intensities can vary considerably. With this model, the relativistic electrons producing this gamma emission would not be coming from a point source in or near the nebula but would be entering diffusely over the entire nebula. In the standard explanation, the high energy electrons would be limited to a 0.2 light year diameter region centered on the pulsar before exhausting all of their energy. According to the superwave theory, these electrons would produce emission covering a much larger region that would not necessarily be centered on the pulsar. It would most likely coincide with the remnant’s X-ray emission region, which is located to one side of the pulsar.

The other unusual finding reported lately is that the Crab Nebula has been gradually dimming. Sandberg and Sollerman have found that the optical and infrared emission from the Crab Nebula has been gradually declining in intensity by about 0.7 ± 0.4 % per year (2.9 ± 1.6 mmag/yr) over the past 20 years. From 2006 to 2009 an even larger decline of about 2% per year is indicated. More recently, besides discovering the gamma ray flares, scientists have found that the gamma ray emission intensity from the Crab Nebula has been declining quite rapidly. Observations with the Fermi gamma ray telescope indicate that since the summer of 2008 its gamma ray intensity has declined about 7%; see stories in e Science News and Before It’s News. In fact, they have found that its gamma emission has brightened and dimmed three times since 1999 on a timescale of about three years.
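To get a feel for what the quoted optical and infrared rate adds up to, the following rough sketch compounds an annual decline of 0.7 ± 0.4 % over the 20-year span. It assumes the rate stayed constant over the whole period, which is a simplification made here and not something the observations establish; the helper name `remaining_fraction` is illustrative only.

```python
def remaining_fraction(percent_per_year: float, years: float) -> float:
    """Fraction of the original intensity left after a steady yearly decline.

    Assumes the same percentage is lost every year (simple compounding).
    """
    return (1.0 - percent_per_year / 100.0) ** years


YEARS = 20  # span quoted for the optical/infrared measurements

# Central value and the two ends of the quoted +/- 0.4 % range
for rate in (0.3, 0.7, 1.1):
    dimmed = 100.0 * (1.0 - remaining_fraction(rate, YEARS))
    print(f"{rate:.1f} %/yr over {YEARS} yr -> about {dimmed:.1f} % fainter")
```

Under that assumption the central value corresponds to a cumulative dimming of roughly 13% over the two decades, with the uncertainty range spanning roughly 6% to 20%.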

Astronomers have been puzzled by this variability since the standard theory predicts that the Crab pulsar radio intensity should show a corresponding decline in intensity, or corresponding variations, but as mentioned above, the pulsar’s intensity instead remains relatively constant. The superwave theory is not similarly troubled by such intensity variations. In fact, long-term changes in intensity would be entirely expected as the superwave propagates through the remnant. Eventually, perhaps sometime within the next millennium or so, after the superwave has completely passed through the nebula on its journey away from the Galactic center (GC), the Crab Nebula will cease to shine as it now does. Its source of illumination will essentially be shut off.

Is the expanding ring recently found around the pulsar not associated, then, with the superwave? Is this a separate, unrelated phenomenon? It would seem to be related to the pulsar itself, rather than to an impacting superwave that is not likely to be centered exactly on the pulsar.

http://www.physorg.com/news/2011-05-crab-nebula-action-case-dog.html

Some misconceptions about the placement of the Crab pulsar are being circulated both in science journal papers and on media news websites, and they need to be corrected. Properly answering Garth’s comment will therefore require a rather extended discussion, which will be given in a follow-up posting on this subject.

Crab nebula emission decline over past two years at ‘hard’ energy levels.

http://www.physorg.com/news/2011-01-high-energy-constant-crab-nebula-video.html